From Prototype to Production: Operationalizing AI Agents

Most enterprise AI journeys follow the same arc: a compelling demo, a funded pilot—and then a quiet stall before anything reaches production. The gap isn't a technology problem. It's an operationalization problem. The moment an agent can take actions—not just generate text—you need governance, architecture, and operations that treat it as a digital teammate, not a glorified chatbot.

Role, Not Technology

- Define agents by job title

- Follow the Observer → Assistant → Orchestrator path

Departments, Not Monoliths

- Arbiter + specialist agents

- Knowledge · Decision · Execution taxonomy

Identity Is the Perimeter

- Scoped permissions per agent

- Human-in-the-loop (HITL) triggers + full traceability

A New Ops Discipline

- Behavioral testing over unit tests

- Cost per outcome as a metric

Outcomes Over Instructions

- Define intent, not steps

- Architecture as guardrails

1. Strategy: Define Agents by Role, Not Technology

Think in job titles, not capabilities. Don't build a "PDF Summarizer"—build a "Compliance Auditor" that happens to read PDFs. Framing agents around functional roles keeps them solving real business bottlenecks rather than performing generic tasks in search of a use case.

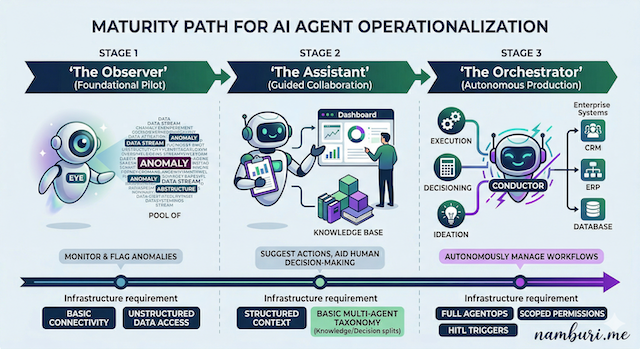

Follow a maturity path. Skipping stages is how you end up with an autonomous agent that confidently sends the wrong email to 10,000 customers:

- Observer agents — monitor and flag anomalies without taking action

- Assistant agents — suggest actions and support human decisions

- Orchestrator agents — autonomously manage workflows and coordinate other agents
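One way to make the maturity path enforceable rather than aspirational is to encode each stage as an ordered level and check it before any operation runs. The sketch below is illustrative only: the `Maturity` enum, the operation names, and `is_allowed` are hypothetical, not part of any particular framework.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """Hypothetical maturity stages, ordered from least to most autonomous."""
    OBSERVER = 1      # may only flag anomalies
    ASSISTANT = 2     # may also suggest actions to a human
    ORCHESTRATOR = 3  # may also act autonomously


# Minimum stage required for each kind of operation (illustrative names).
REQUIRED_STAGE = {
    "flag": Maturity.OBSERVER,
    "suggest": Maturity.ASSISTANT,
    "execute": Maturity.ORCHESTRATOR,
}


def is_allowed(stage: Maturity, operation: str) -> bool:
    """Return True if an agent at `stage` may perform `operation`."""
    required = REQUIRED_STAGE.get(operation)
    # Unknown operations are denied rather than silently permitted.
    return required is not None and stage >= required
```

Promoting an agent then becomes an explicit, reviewable change to its stage instead of a side effect of a prompt edit.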

2. Architecture: Think Departments, Not Monoliths

A single agent that does everything (research, decide, execute, report) is brittle and hard to debug. Use a multi-agent system in which an Arbiter delegates to specialists, each communicating through standardized protocols such as the Model Context Protocol (MCP) rather than custom glue.

A functional taxonomy keeps failures locatable:

- Knowledge agents — retrieve, synthesize, and maintain context

- Decision agents — evaluate options against policy and risk thresholds

- Execution agents — take action in external systems
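The Arbiter-plus-specialists shape can be sketched in a few lines. This is a minimal in-process illustration, assuming specialists are plain Python callables that take and return a state dictionary; it does not implement MCP or any real transport, and the `Arbiter` class, the handler names, and the 1,000 approval limit are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Handler = Callable[[dict], dict]


@dataclass
class Arbiter:
    """Routes a task through specialists and records which ones ran."""
    specialists: Dict[str, Handler] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)

    def register(self, kind: str, handler: Handler) -> None:
        self.specialists[kind] = handler

    def run(self, task: dict, pipeline: List[str]) -> dict:
        state = dict(task)
        for kind in pipeline:
            if kind not in self.specialists:
                raise KeyError(f"no specialist registered for {kind!r}")
            self.log.append(kind)  # makes a failure locatable by role
            state = self.specialists[kind](state)
            if state.get("halt"):
                break  # a specialist can stop the pipeline
        return state


def knowledge(state: dict) -> dict:
    # Stand-in for retrieval: attach context for the request.
    return {**state, "context": f"policy for {state['request']}"}


def decision(state: dict) -> dict:
    # Stand-in for a policy check against an assumed limit of 1000.
    approved = state["amount"] <= 1000
    return {**state, "approved": approved, "halt": not approved}


def execution(state: dict) -> dict:
    # Stand-in for acting in an external system.
    return {**state, "status": "done"}
```

Because the Arbiter logs each hand-off, a rejected or failed task shows which role stopped it, which is the practical payoff of the taxonomy.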

Knowledge agents are only as reliable as the data beneath them. A unified Knowledge Core spanning operational and analytical layers is what gives them accurate, source-attributed context.

3. Governance: Identity Is the New Perimeter

The biggest risk in an agentic system isn't an external attacker—it's an agent with too much power and too little oversight. Governance has three non-negotiables:

Scoped permissions. Every agent needs its own verifiable identity and a least-privilege permission set. An agent whose job is to read documents should not be able to delete them. Scoped permissions limit the blast radius when something goes wrong.
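As a rough illustration of least privilege, each agent can carry an immutable set of scopes that every tool checks before acting. The scope strings, `AgentIdentity`, and the in-memory `store` below are assumptions made for the example; a real deployment would back this with the organization's identity provider.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its scopes."""


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    scopes: frozenset  # e.g. frozenset({"documents:read"})


def authorize(identity: AgentIdentity, scope: str) -> None:
    """Raise PermissionDenied unless the agent holds `scope`."""
    if scope not in identity.scopes:
        raise PermissionDenied(f"{identity.agent_id} lacks scope {scope!r}")


def read_document(identity: AgentIdentity, doc_id: str, store: dict) -> str:
    authorize(identity, "documents:read")
    return store[doc_id]


def delete_document(identity: AgentIdentity, doc_id: str, store: dict) -> None:
    # The check runs before any side effect, so a denied call changes nothing.
    authorize(identity, "documents:delete")
    store.pop(doc_id, None)
```

An agent created with only `documents:read` can call `read_document` but gets `PermissionDenied` from `delete_document`, leaving the store untouched.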

Human-in-the-loop (HITL) triggers. Autonomy is a dial, not a switch. When confidence on a high-stakes decision drops below a defined threshold, the agent pauses and escalates; it doesn't guess and proceed.
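The escalation rule itself is small enough to write down. The sketch below assumes the agent reports a confidence score between 0 and 1 and that each action is tagged as high-stakes or not; the `Proposal` type and the 0.9 default threshold are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    action: str
    confidence: float  # assumed to be in [0, 1]
    high_stakes: bool


def route(proposal: Proposal, threshold: float = 0.9) -> str:
    """Return "escalate" to pause for a human, otherwise "proceed"."""
    if proposal.high_stakes and proposal.confidence < threshold:
        return "escalate"
    return "proceed"
```

Turning the dial means changing `threshold` or the `high_stakes` tagging per action, which keeps the autonomy decision in configuration rather than buried in a prompt.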

Traceability. Every reasoning step, tool call, and action must be logged and legible to non-engineers. When someone asks "why did the agent do that?", you need an answer ready.
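One possible shape for such a trace is a list of structured events that can be exported as JSON Lines for engineers and rendered as plain sentences for everyone else. The `TraceEvent` fields and the `Trace` helper below are hypothetical and use only the Python standard library.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class TraceEvent:
    agent_id: str
    kind: str      # "reasoning", "tool_call", or "action"
    summary: str   # one plain-language sentence for non-engineers
    detail: dict   # machine-readable specifics (must be JSON-serializable)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class Trace:
    def __init__(self) -> None:
        self.events: List[TraceEvent] = []

    def record(self, agent_id: str, kind: str, summary: str, **detail) -> None:
        self.events.append(TraceEvent(agent_id, kind, summary, detail))

    def to_jsonl(self) -> str:
        """One JSON object per line, suitable for a log pipeline."""
        return "\n".join(json.dumps(asdict(e)) for e in self.events)

    def explain(self) -> str:
        """Numbered, human-readable answer to "why did the agent do that?"."""
        return "\n".join(
            f"{i}. [{e.kind}] {e.summary}" for i, e in enumerate(self.events, 1)
        )
```

Requiring a `summary` on every event is the design choice that matters here: it forces legibility at write time instead of reconstructing it after an incident.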

4. AgentOps: A New Operational Discipline

Agents are non-deterministic: behavior varies with context, model state, and available tools. Traditional DevOps isn't designed for that. Three practices close the gap:

- Behavioral testing. Validate intent, not just output. Cover adversarial scenarios and failure conditions, not just the happy path.

- Continuous tuning. Use traces to find where agents get confused or wasteful, then refine prompts or retire underperformers.

- Cost as a metric. An agent that takes 50 steps to do what a human does in two isn't production-ready. Track token cost and steps-per-outcome alongside accuracy.
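To make the cost metric concrete, here is a small sketch that rolls run records up into steps and spend per successful outcome. The `Run` record and the per-token price passed in are assumptions for the example; failed runs are deliberately counted in the cost, since the tokens were spent either way.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Run:
    steps: int
    tokens: int
    succeeded: bool


def cost_per_outcome(runs: List[Run], usd_per_1k_tokens: float) -> Optional[dict]:
    """Aggregate cost metrics; returns None if no run succeeded."""
    successes = sum(r.succeeded for r in runs)
    if successes == 0:
        return None  # no outcome to divide by
    total_steps = sum(r.steps for r in runs)
    total_tokens = sum(r.tokens for r in runs)
    return {
        "success_rate": successes / len(runs),
        "steps_per_outcome": total_steps / successes,
        "usd_per_outcome": total_tokens / 1000 * usd_per_1k_tokens / successes,
    }
```

Tracked next to accuracy, these numbers show when a single wandering run is dragging up the average even though most runs look cheap.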

5. Engineering Culture: Outcomes Over Instructions

Stop writing steps. Start defining outcomes. Specify what the agent must achieve, the boundaries it must stay within, and when to escalate—then let it determine the how. Engineers become managers briefing a capable employee, not programmers writing a script.
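In code, an outcome-first brief can look like a declarative spec: a goal, a completion check, a boundary check, and an escalation check, with no steps at all. Everything named below (`OutcomeSpec`, `evaluate`, the refund example and its 500 limit) is hypothetical and only meant to show the shape.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class OutcomeSpec:
    goal: str                                # what must be achieved
    is_done: Callable[[dict], bool]          # has the outcome been reached?
    within_bounds: Callable[[dict], bool]    # is the agent inside its limits?
    should_escalate: Callable[[dict], bool]  # does a human need to step in?


def evaluate(spec: OutcomeSpec, state: dict) -> str:
    """Classify the current state; boundaries are checked first."""
    if not spec.within_bounds(state):
        return "violation"
    if spec.should_escalate(state):
        return "escalate"
    return "done" if spec.is_done(state) else "continue"


# Illustrative brief: the agent decides how to resolve the request.
refund_spec = OutcomeSpec(
    goal="Resolve the customer's refund request",
    is_done=lambda s: s.get("refund_issued", False),
    within_bounds=lambda s: s.get("refund_total", 0) <= 500,
    should_escalate=lambda s: s.get("customer_disputes", 0) > 1,
)
```

Nothing in the spec says how to resolve the request; it only states what counts as success, what is off-limits, and when to hand over to a person.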

Architecture shifts accordingly: instead of dictating every step, it provides guardrails, fallbacks, and retry logic that keep autonomous reasoning safe within defined boundaries.
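A guardrail wrapper of this kind might look like the following sketch: the agent's step is retried on failure, its result is checked against a safety predicate, and a fallback (for example, escalating to a human) is used if no attempt passes. The function name `guarded_call` and its parameters are assumptions for illustration.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def guarded_call(
    step: Callable[[], T],
    is_safe: Callable[[T], bool],
    fallback: Callable[[], T],
    max_attempts: int = 3,
) -> T:
    """Run `step` with retries; return its result only if `is_safe` accepts it."""
    for _ in range(max_attempts):
        try:
            result = step()
        except Exception:
            continue  # transient failure: try again
        if is_safe(result):
            return result
        # Unsafe result: discard it and retry.
    # Every attempt failed or was rejected, so take the safe path.
    return fallback()
```

The agent still chooses how to do the work inside `step`; the architecture only decides what is allowed out.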

The Bottom Line

Operationalizing AI agents is not simply a technical upgrade. It's a new way of designing, deploying, and managing software—one that requires new mental models at every level of the organization, from engineering to legal to executive leadership.