Beyond the Hype: Why AI TRiSM is the Secret to Scaling Enterprise AI

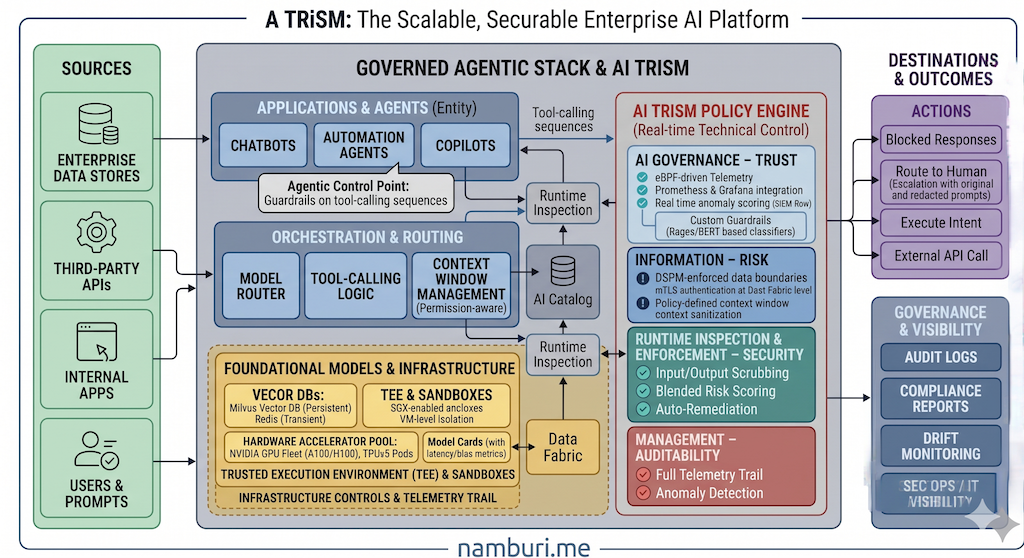

The era of "experimenting" with AI is officially over. While hallucination gets most of the headlines, the real threats keeping CISOs and CIOs awake are data leaks, algorithmic bias, and "Shadow AI" operating outside the sight of IT. AI TRiSM (Trust, Risk, and Security Management), coined by Gartner, isn't just another compliance checklist. It's the operational framework required to turn AI from a risky experiment into a reliable enterprise powerhouse.

AI Governance

- Centralised model catalog

- Model Cards & Bills of Materials

Information Governance

- DSPM-enforced data boundaries

- Permission-aware context windows

Runtime Inspection

- Real-time risk scoring

- Auto-remediation & human escalation

Infrastructure Controls

- Trusted Execution Environments

- Full telemetry trail for auditability

The "Silent" Threat: Why Traditional Security Fails

Traditional cybersecurity was built to protect static data and fixed perimeters. AI introduces dynamic failure modes that those architectures were never designed to handle.

Policy Violations, Not Hackers

80% of unauthorised AI transactions are projected to be caused by internal policy violations—employees oversharing sensitive data with AI tools—rather than external attackers.

The Invisible Attack Surface

GenAI traffic is surging in triple figures. Without a central inventory, companies have no idea which "Copilots" their employees are feeding sensitive data into.

Adversarial Input Manipulation

Models can be "tricked" into bypassing safety filters or executing unintended actions by embedding malicious instructions in user inputs. Training data extraction is a separate but related attack vector.

Third-Party & Model Exposure

Calling external APIs means sensitive prompts transit third-party infrastructure. A poisoned or backdoored model weight file can be as damaging as a compromised software library. Vendor lock-in compounds the exposure.

The Business Case: What Does a Breach Actually Cost?

Skeptics often frame TRiSM as overhead. The math says otherwise. The average cost of a data breach reached $4.88M in 2024 (IBM) per incident. A single AI-related incident—a prompt injection that exfiltrates customer PII, or a biased model that triggers a regulatory investigation—can dwarf that figure with added reputational damage and remediation costs.

A phased TRiSM implementation, by contrast, typically starts with existing DSPM and monitoring tooling already licensed. The incremental cost of adding AI-specific policy engines and a model catalog is a fraction of a single incident response retainer. Put simply: TRiSM is cheap insurance against an expensive class of failure.

The Four Pillars of a Robust TRiSM Architecture

To manage these risks, AI TRiSM moves security from a "once-a-year audit" to a real-time technical control. Each pillar maps to a letter of the acronym, giving every layer a clear owner and purpose.

AI Governance — Trust (The Blueprint)

Trust begins with visibility. You cannot govern what you haven't cataloged. This layer creates a centralised AI Catalog of every model, application, and agent. By capturing Model Cards and "Bills of Materials," teams establish a baseline of "normal" behaviour to measure against later.

Information Governance — Risk (The Boundaries)

Risk is fundamentally a data problem. AI systems are only as safe as the data they can touch. This pillar uses DSPM (Data Security Posture Management) to ensure an AI agent doesn't accidentally summarise a sensitive M&A document for an employee who lacks the right permissions. Rule #1: Fix the data foundation before you deploy the AI.

Runtime Inspection & Enforcement — Security (The Shield)

This is the "heart" of TRiSM. Policy engines inspect every prompt and response as it transits the system.

- Blended Risk Scoring: The system calculates a risk score and flags anomalies in real-time.

- Auto-Remediation: If a risk score is too high, the system automatically blocks the response or routes it to a human for review.

Infrastructure and Stack — Management (The Foundation)

Long-term governance requires a secure, auditable foundation. Trusted Execution Environments and sandboxes isolate model workloads. Every interaction must leave a telemetry trail for auditability—ensuring that if a model "drifts" or begins producing biased results, there is a clear record of why and when it happened.

TRiSM in Action: Models vs. Agents

The framework adapts based on what you are deploying. The controls differ meaningfully across the three entity types:

| Entity | Primary Risk | TRiSM Control |

|---|---|---|

| Models | Prompt Injections | Input/Output scrubbing |

| Applications | Data Oversharing | Context-window permissioning |

| Agents | Unanticipated Actions | "Guardrails" on tool-calling sequences |

From Theory to Practice: How to Start

Implementing AI TRiSM doesn't have to happen all at once. The most successful organisations follow a phased approach:

Clean the House

Use automated discovery tools to find "Shadow AI" and fix data classifications. You can't govern what you can't see.

Build the Catalog

Document high-risk models first—especially those in Finance or Healthcare. Model Cards and Bills of Materials belong in version control, not wikis.

Deploy Runtime Monitoring

Use tools like Amazon SageMaker Model Monitor, Fiddler AI, or Prisma AIRS to track accuracy and data drift in production.

Red-Team Models

Before any high-risk model goes to production, run structured adversarial testing—probe for prompt injection, data extraction, and policy bypass. Increasingly required under the EU AI Act for high-risk systems.

Align the Human Layer

Connect SecOps with legal and AI engineering to create clear escalation paths for when an AI misbehaves. Governance without human accountability is theatre.

TRiSM in the Governed Agentic Stack

TRiSM doesn't operate in isolation. In a production agentic architecture, agents act on behalf of users—browsing data stores, calling APIs, and writing to systems of record. Without runtime inspection at each tool-calling step, an agent can escalate its own permissions, exfiltrate data through a side channel, or execute an action that was never intended by the user who issued the original prompt.

This is why TRiSM's "Guardrails on tool-calling sequences" specifically addresses agentic systems. In an architecture shaped by an AI-first data strategy, the policy engine becomes a governance control point embedded in the orchestration layer—not a perimeter to walk around. For teams already operating enterprise AI agents in production, adding runtime inspection is the single highest-leverage security investment available today.

The Cost of Delaying AI TRiSM Implementation

In a world governed by the EU AI Act and increasing scrutiny from the FTC, trust is no longer a "nice-to-have"—it is a competitive advantage. AI TRiSM provides the visibility to see AI, the insight to understand its risk, and the real-time controls to govern it before regulators or adversaries force a response.

The AI race isn't won by the team with the biggest model—it's won by the team whose AI doesn't make the front page for the wrong reasons. Reliability, auditability, and safety aren't constraints on AI ambition. They are the AI ambition.